This post was inspired by the discussion about the value of research in legal academia at Workplace Prof Blog. In the comment section of the linked post you’ll see that on one side of the issue we have individuals like Mitchell Rubenstein arguing that “teaching is only of secondary importance” to today’s legal professoriate. On the other side you’ll see rejoinders like that of Howard Wasserman, who argues that research complements teaching and that, in fact, good researchers are good teachers and vice versa.

This strikes me as an empirical question, but one for which I have access to very little good quality data. Nonetheless, in the spirit of summer blogging, below are the results of a non-scientific analysis of the relationship between the research output of scholars at my own school (Northwestern Law) and their subjective ratings at www.ratemyprofessors.com.

The dependent variable of concern here is the overall rating for each professor. There are a boatload of caveats that go along with these ratings. We have to assume there is selection bias at work, they’re likely scale variant, and there aren’t very many of them. That said, they’re all I have. I’m sure (that is to say, I hope) that schools model this sort of thing with their higher-quality student assessment data. I found ratings for 14 traditional and 5 adjunct or clinical law faculty at NU (I excluded legal writing faculty from the analysis). I then used Thomson Reuters Web of Science to determine how many publications each professor had and how many citations each had received. Checking CVs to determine when each professor began teaching allowed me to calculate the rate of publications per year and annual impact (citations per publication per year).
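
The two derived measures are simple ratios. The sketch below shows one way to compute them from a per-professor record. The `Professor` class, its field names, and the example numbers are hypothetical illustrations, not the actual dataset, and it assumes every professor has at least one year of teaching.

```python
from dataclasses import dataclass


@dataclass
class Professor:
    """One row of a hypothetical ratings/citations dataset (field names are illustrative)."""
    name: str
    rating: float        # overall ratemyprofessors.com rating
    publications: int    # publication count from Web of Science
    citations: int       # citation count from Web of Science
    years_teaching: int  # years since the professor began teaching, taken from the CV


def publications_per_year(p: Professor) -> float:
    """Rate of publication: publications divided by years teaching."""
    if p.years_teaching <= 0:
        raise ValueError("years_teaching must be positive")
    return p.publications / p.years_teaching


def annual_impact(p: Professor) -> float:
    """Citations per publication per year; 0.0 for a professor with no publications."""
    if p.years_teaching <= 0:
        raise ValueError("years_teaching must be positive")
    if p.publications == 0:
        return 0.0
    return p.citations / p.publications / p.years_teaching


# Made-up example: 20 publications, 300 citations, 10 years teaching.
example = Professor("Professor A", 4.5, publications=20, citations=300, years_teaching=10)
print(publications_per_year(example))  # 2.0
print(annual_impact(example))          # 1.5
```

Returning 0.0 for professors with no indexed publications is a design choice made here for convenience; dropping them from the impact analysis would be an equally defensible alternative.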

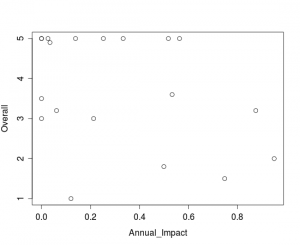

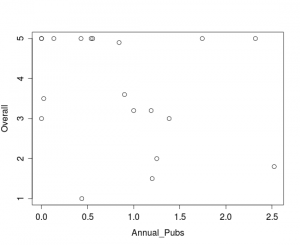

One of the arguments against research is that it distracts professors from teaching duties. The focus on producing articles that likely hold little interest for law students – or anyone outside of a relatively narrow area of legal research – leaves less time for professors to focus on teaching. The plot below shows that – at least in this limited dataset – there is virtually no meaningful relationship between the number of articles produced per year and a professor’s overall student rating.
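
“Virtually no meaningful relationship” is the kind of judgment a correlation coefficient summarizes. The post doesn’t say whether one was computed, so the following is only a minimal sketch of Pearson’s r written out by hand; the input lists are made-up values, not the Northwestern data.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Co-deviation and squared deviations from each mean.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical values, not the Northwestern data.
pubs_per_year = [0.5, 1.0, 1.5, 2.0]
ratings = [4.0, 4.5, 4.0, 4.5]
print(round(pearson_r(pubs_per_year, ratings), 3))  # 0.447
```

A value near 0 corresponds to the flat scatter described above. With only 19 professors, even a moderate-looking r can be noise.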

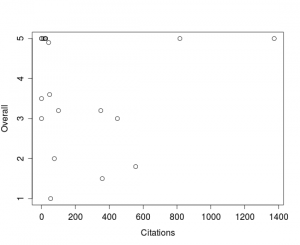

On the other hand, some might argue that those who are particularly good at research also excel at teaching. But plotting the overall student rating against the annual impact variable shows no relationship at all between a professor’s ability to produce high-impact research and the ratings they get from students.

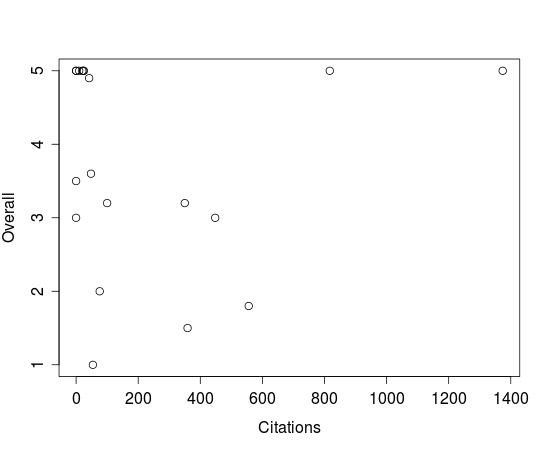

Alternatively, one might claim that it isn’t a professor’s rate of citation but their overall impact that relates to teaching quality. The plot here suggests that – if we had more data – we might indeed find some sort of positive relationship between total citations and ratings. Unfortunately, there just isn’t enough data here to make confident claims one way or the other.

Ultimately, due in part to the limits of the dataset, we can’t clearly see whether research output correlates with either good or bad teaching reviews – in fact, if I had to take a position, these data would lead me to conclude there is no significant relationship either way. That said, what this exercise does show is that data can help us answer the question. There is no shortage of people adamantly arguing for or against the importance of research in legal academia. Unfortunately, the arguments too often boil down to unsourced assertions and false dichotomies. A good empirical treatment of the question would help us all better understand how research relates to teaching quality. I don’t personally have the time to assemble data for professors from more schools, but I would be happy to share the little that I have or to analyze any data that others might put together.

Very interesting. I would think that everyone at NU would be a pretty good to outstanding teacher, so there may be only a narrow difference in quality of instruction. Might that obscure whatever relationship there is? Also, I wonder whether age has something to do with this. Not sure which way, but we probably should be comparing people at equal points in their careers.

You’re right. The ratings data I have are strongly left-skewed. Hopefully a bigger dataset would help address that. I noticed in the article mentioned by AnonProf over at Workplace Prof Blog (Are Scholars Better Teachers) that they seem to have dealt with the non-normal distribution by categorizing ratings as above or below the mean.
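
Here is a minimal sketch of that kind of dichotomization, assuming ratings exactly equal to the mean go into the lower group and that one then compares some research measure across the two groups. The function names, the choice of comparing mean publication rates, and the numbers are all hypothetical. The comment above doesn’t say what the article did after splitting.

```python
from statistics import mean


def split_at_mean(ratings):
    """Label each rating True if it is strictly above the sample mean, else False."""
    cutoff = mean(ratings)
    return [r > cutoff for r in ratings]


def group_means(values, labels):
    """Mean of `values` for the above-mean group and the at-or-below-mean group."""
    above = [v for v, high in zip(values, labels) if high]
    below = [v for v, high in zip(values, labels) if not high]
    if not above or not below:
        raise ValueError("both groups need at least one professor")
    return mean(above), mean(below)


# Hypothetical ratings and publication rates.
ratings = [3.0, 4.0, 5.0, 5.0]
pubs_per_year = [1.0, 2.0, 1.5, 0.5]
labels = split_at_mean(ratings)            # [False, False, True, True]
print(group_means(pubs_per_year, labels))  # (1.0, 1.5)
```

Splitting at the mean sidesteps the skew but throws away information. With left-skewed ratings, most professors can land on one side of the cutoff, which leaves the other group very small.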

An analysis of how ratings relate to age would definitely be interesting. I imagine education scholars have done studies tracking the student ratings professors receive over time. I’d be curious to see what the curve looks like for law professors.